Better cities with CityCam

Building hardware and software for energy-efficient and highly autonomous traffic cameras

Keeping up with technological progress, Mad Devs undertook the development of a new-generation intersection monitoring system for a company called CityCam. The system’s purpose is to let anyone monitor a city’s traffic in real time. Traffic monitoring can help prevent congestion and, overall, makes transportation safer and faster. The project, known internally as Microphotographer, was largely about hardware and had two major parts: engineering the camera device and its backend, and building an application with a frontend and a backend.

Why cities need systems like CityCam

If traffic is poorly organised, virtually any process in a modern city’s life can come to a halt. Around the world, the most advanced technologies are applied to urban traffic to make sure that hundreds of thousands of people and vehicles moving around simultaneously can do it safely and efficiently.

Traffic cameras are one of the tools that enable better transportation decisions in real time. If you can monitor roads and intersections, you can plan routes optimally, whether you’re a resident driving around the city or an official in charge of traffic management. Consider the benefits of traffic cameras for three potential categories of users.

Providing these benefits takes cutting-edge technology. The cameras have to run on minimal power and operate continuously without frequent outside intervention. Building them that way was a serious challenge.

Challenges & solutions

The project’s two main goals were low energy use and high load capacity. For the former, we engineered a device that takes a photo and sends it to the server every three seconds while drawing under one watt of power. For the latter, we built, in parallel, a high-load service that stores the images and delivers them to the user app and the customer’s website. The general flow of the system went like this:

- Cameras send images to the cameras’ backend

- The cameras’ backend sends images to Amazon S3

- The cameras’ backend passes information about the images uploaded to S3, together with device data reported by the cameras (battery charge and SIM card balance), to the PWA backend

- The progressive web application (PWA) backend generates presigned S3 links for the images that have just been uploaded

- The PWA backend sends links to images stored in S3 to users’ devices

- Users’ devices send requests to S3 to get images according to presigned links
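The last three steps rely on presigned links: short-lived URLs that let a user’s device fetch one image straight from storage without holding any credentials. S3 signs these with AWS Signature Version 4, and in Python the SDK call is boto3’s `generate_presigned_url`. The sketch below is not SigV4; it is a minimal stand-in, assuming a shared HMAC secret, that shows the same idea end to end. The secret, the storage URL, and the `camera_id/timestamp.jpg` key scheme are all hypothetical.

```python
import hashlib
import hmac
from urllib.parse import parse_qs, urlencode, urlparse

# Hypothetical values: a secret shared by the link issuer and the storage
# service, and a placeholder storage endpoint.
SECRET = b"hypothetical-signing-secret"
STORAGE_URL = "https://storage.example.com"

# Cameras' backend: keys of uploaded images, newest last
# (an in-memory stand-in for the S3 bucket).
uploaded_keys: list[str] = []


def store_image(camera_id: str, timestamp: int) -> str:
    """Record an uploaded image under a per-camera key and return the key."""
    key = f"{camera_id}/{timestamp}.jpg"
    uploaded_keys.append(key)
    return key


def _signature(key: str, expires_at: int) -> str:
    # HMAC over the key and the expiry time, so neither can be altered.
    message = f"{key}:{expires_at}".encode()
    return hmac.new(SECRET, message, hashlib.sha256).hexdigest()


def make_link(key: str, now: int, ttl_s: int = 60) -> str:
    """PWA backend: issue a time-limited link to one stored image."""
    expires_at = now + ttl_s
    query = urlencode({"expires": expires_at, "signature": _signature(key, expires_at)})
    return f"{STORAGE_URL}/{key}?{query}"


def link_is_valid(url: str, now: int) -> bool:
    """Storage side: serve the image only for an intact, unexpired link."""
    parsed = urlparse(url)
    key = parsed.path.lstrip("/")
    params = parse_qs(parsed.query)
    try:
        expires_at = int(params["expires"][0])
        signature = params["signature"][0]
    except (KeyError, ValueError):
        return False
    expected = _signature(key, expires_at)
    return hmac.compare_digest(signature, expected) and now < expires_at


if __name__ == "__main__":
    store_image("cam-042", 1700000000)
    url = make_link(uploaded_keys[-1], now=1700000001)  # link to the newest image
    print(url)
    print(link_is_valid(url, now=1700000030))  # True: signature intact, not expired
    print(link_is_valid(url, now=1700000100))  # False: link expired
```

Because the signature covers both the object key and the expiry, a user cannot reuse a link for another camera’s image or past its lifetime, which is what lets the PWA backend hand links out freely.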

Building a prototype

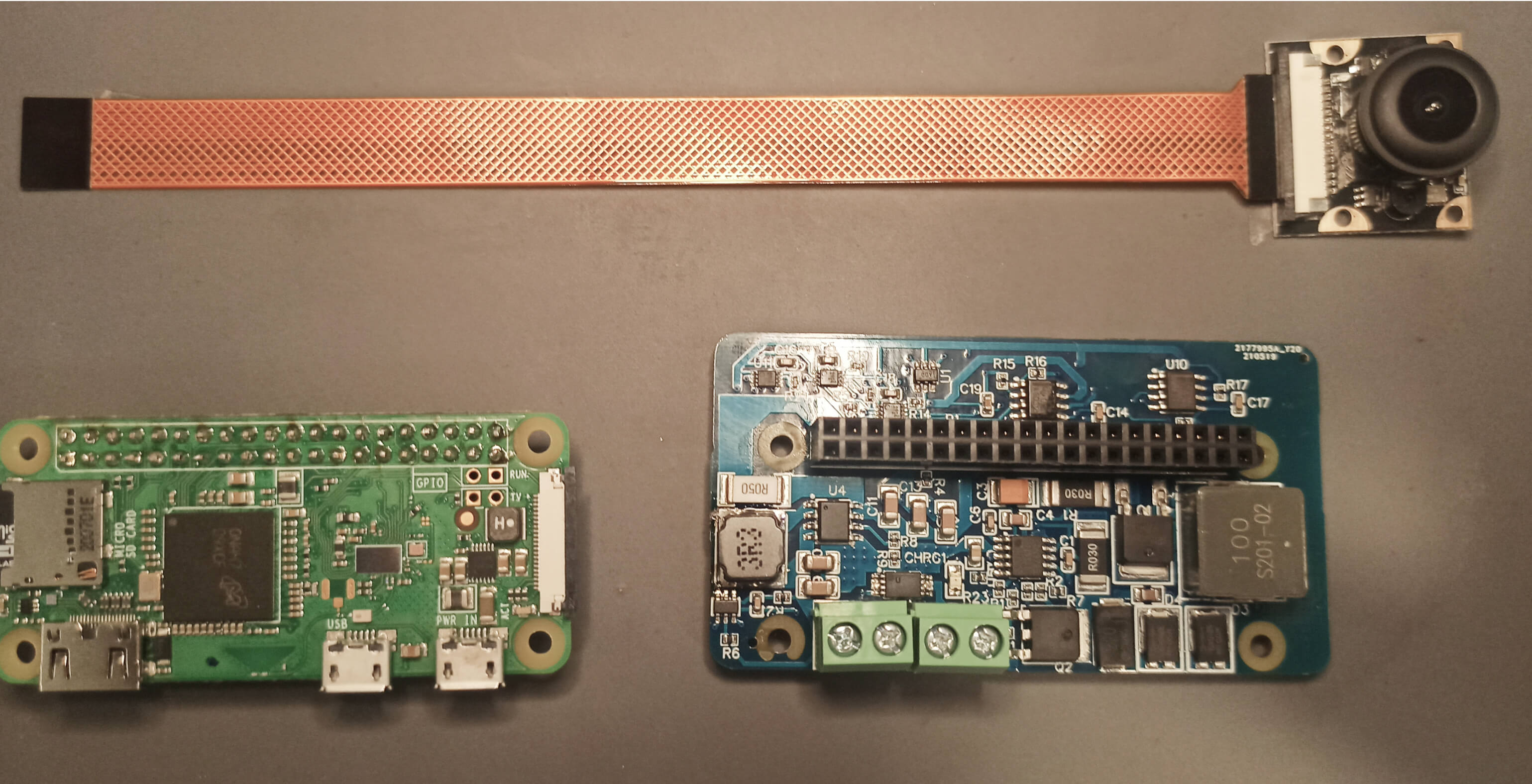

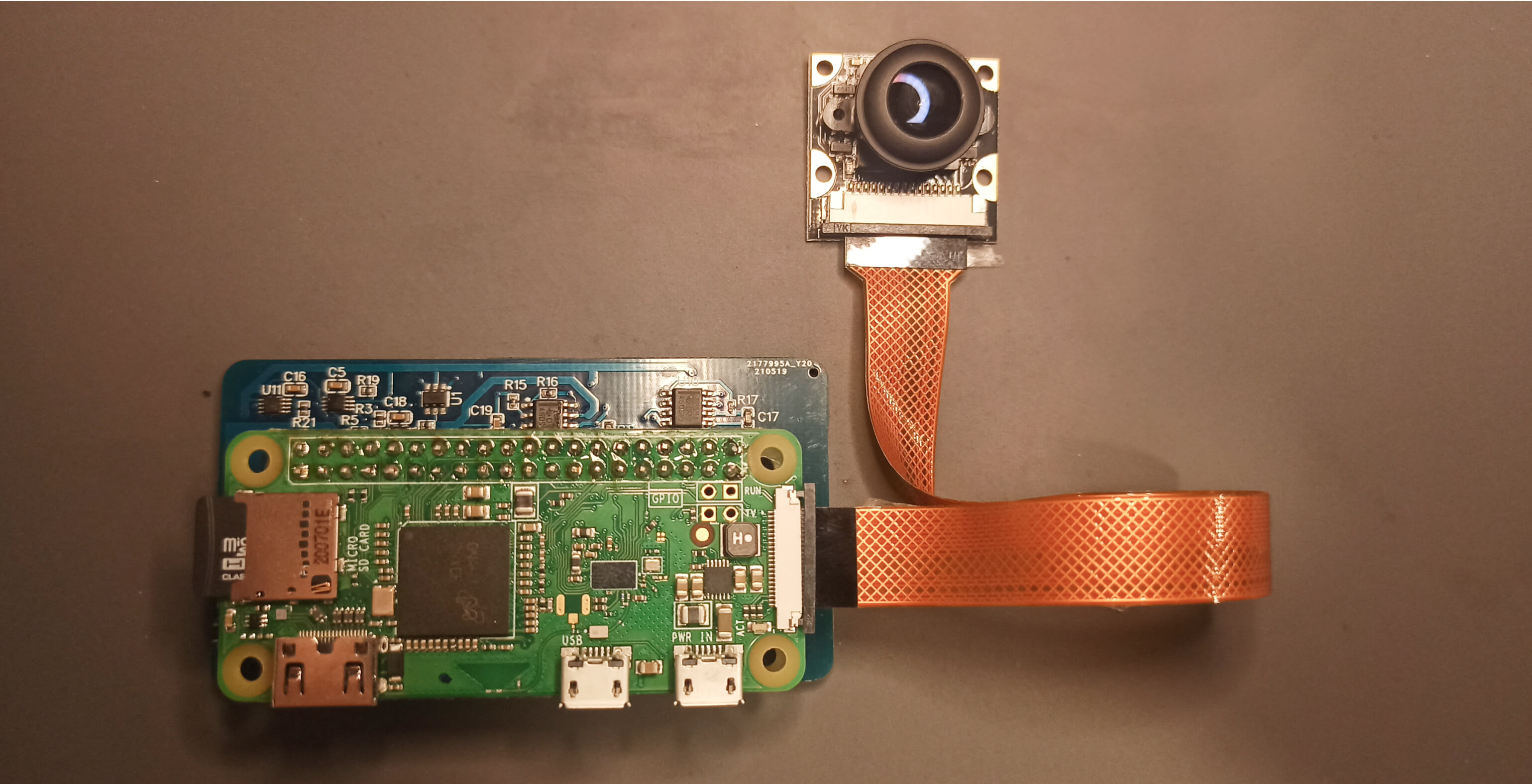

Prototyping the camera device meant finding the most advanced solutions the modern hardware industry has to offer. It was a long process of trial and error: we constantly updated our plans and tried out better alternatives in each iteration. The early test prototypes were assembled from ready-made modules.

Low power supply efficiency was a major challenge that we had to overcome. The device’s initial version used double conversion: a linear regulator first stepped the +5 V from the power banks down to charge the Li+ battery (3.7–4.2 V), and the energy was then boosted back up to 5 V to power the device’s peripherals. This boost stage was a pulsed DC/DC converter with a large output ripple, so the controller and the peripherals operated unstably.
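Why double conversion hurts can be shown with a little arithmetic. An ideal linear regulator passes the same current in and out, so its efficiency is roughly V_out / V_in, and the efficiencies of cascaded stages multiply. The boost-stage efficiency below (85%) is an assumed figure, not one measured on the device, and battery charge and discharge losses are ignored.

```python
def linear_regulator_efficiency(v_in: float, v_out: float) -> float:
    """Ideal linear regulator: input current equals output current, so the
    efficiency is Vout / Vin (quiescent current ignored)."""
    if not 0 < v_out <= v_in:
        raise ValueError("a linear regulator can only step the voltage down")
    return v_out / v_in


def chain_efficiency(*stage_efficiencies: float) -> float:
    """The efficiencies of cascaded conversion stages multiply."""
    total = 1.0
    for eta in stage_efficiencies:
        total *= eta
    return total


# Assumption: efficiency of the DC/DC boost stage back up to 5 V (not given in the text).
BOOST_EFFICIENCY = 0.85

if __name__ == "__main__":
    for v_battery in (3.7, 4.2):
        eta = chain_efficiency(linear_regulator_efficiency(5.0, v_battery), BOOST_EFFICIENCY)
        print(f"battery at {v_battery} V: {eta:.0%} of the power bank's energy reaches the 5 V rail")
```

Under these assumptions only about 63% to 71% of the power bank’s energy reaches the 5 V rail, before the ripple problems are even considered.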

Reimagining power supply

We reworked the device’s hardware and software components around a new power supply concept. Eliminating double conversion saved energy, and abandoning linear conversion further increased efficiency.

We devised a concept of uninterruptible power supply from power banks with instant switching to a backup Li+ battery. As a result, energy consumption was halved.

We also moved away from ready-made modules and started designing our own boards, which reduced costs, simplified the circuitry, and gave us more flexibility in developing the device.

Active components of the device

- Controller module based on an STM32L152RBTA microcontroller: operates and commands other modules.

- Modem module based on a SIM5360E chip: connects the device to the mobile network and the Internet and transmits the images taken by the camera to cloud storage.

- Arducam Mini Module Camera Shield 2 MP Plus OV2640: takes images at a specified frequency.

- DC/DC step-up (boost) converter based on the IP5306 chip: stabilises the input current for the correct operation of the device.

- TP4056 micro USB charge controller: checks if the power bank is discharged.

- In-house engineered printed circuit boards for the modules: consolidate all modules into one device.
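The controller’s job of commanding the other modules boils down to a fixed capture cycle: take a photo, hand it to the modem, then idle until the next three-second slot. The real firmware runs on the STM32 (C is in the stack); the Python model below only illustrates that scheduling logic, and the `Camera` and `Modem` interfaces, the function name, and the injected clock are hypothetical.

```python
import time
from typing import Callable, Protocol


class Camera(Protocol):
    def capture(self) -> bytes: ...


class Modem(Protocol):
    def send(self, image: bytes) -> bool: ...


def run_capture_cycles(
    camera: Camera,
    modem: Modem,
    cycles: int,
    period_s: float = 3.0,
    clock: Callable[[], float] = time.monotonic,
    sleep: Callable[[float], None] = time.sleep,
) -> int:
    """Capture and transmit one image per period; return how many were sent.

    `sleep` stands in for the microcontroller's low-power mode: the device
    idles for whatever is left of the period after capture and transmission,
    so the cycle length stays roughly constant.
    """
    sent = 0
    for _ in range(cycles):
        started = clock()
        image = camera.capture()
        if modem.send(image):
            sent += 1
        # Never pass a negative duration if a cycle overran its period.
        sleep(max(0.0, period_s - (clock() - started)))
    return sent
```

Injecting `clock` and `sleep` keeps the logic testable off the device; on real hardware the same loop would be driven by a timer interrupt waking the MCU.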

Board tracing

Continuously perfecting the device

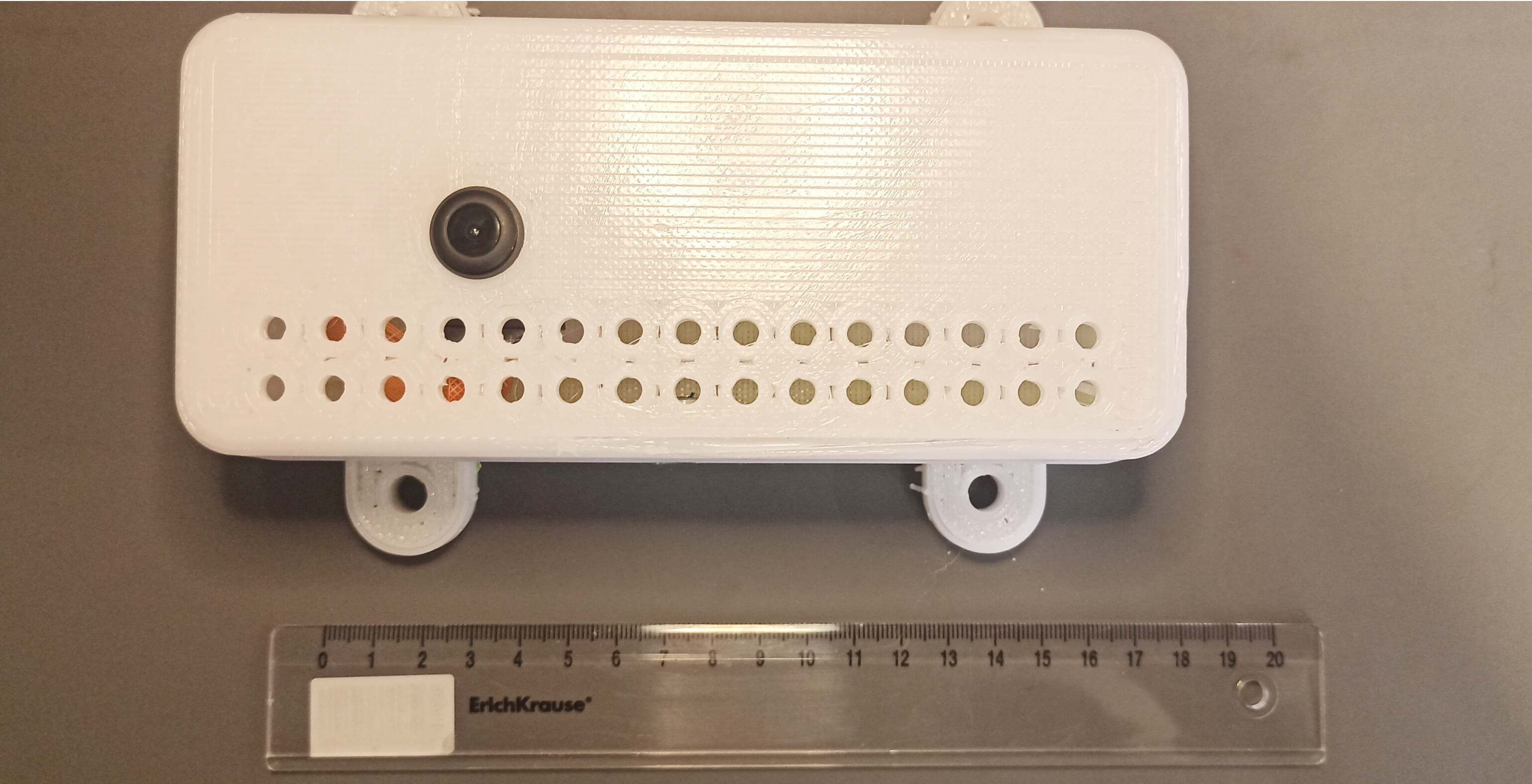

Later versions of the device added video streaming at resolutions from 320×240 to 1920×1080 in 32 px steps, frame rates from 1 to 30 fps, and automatic night vision. Low power consumption was still our main achievement: the device draws less than 300 mW in sleep mode and less than 1.5 W in active mode.

Another major strength of the revised device was solar power. We added an adjustable solar charge controller with MPPT (maximum power point tracking): its default voltage is 18 V, and the charging current can reach 4 A. In a sunny location, with a solar panel of 20 W or more and a battery of 15 Ah or more, the device can run 24/7 in active mode without any external power source.
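The figures above can be turned into a rough energy budget. Battery energy is capacity times voltage, autonomy is that energy divided by the load, and the panel must replace a full day’s consumption during the hours of full sun. The text does not state the battery voltage or the system’s harvesting losses, so the 12 V nominal voltage and 70% efficiency below are assumptions.

```python
def battery_energy_wh(capacity_ah: float, nominal_v: float) -> float:
    """Stored energy: capacity (Ah) times nominal voltage (V)."""
    return capacity_ah * nominal_v


def autonomy_hours(capacity_ah: float, nominal_v: float, load_w: float) -> float:
    """Hours a full battery can power a constant load with no sun."""
    return battery_energy_wh(capacity_ah, nominal_v) / load_w


def sun_hours_needed(load_w: float, panel_w: float, harvest_efficiency: float = 1.0) -> float:
    """Full-sun hours per day the panel must deliver to cover 24 h of load."""
    return load_w * 24 / (panel_w * harvest_efficiency)


# From the text: <1.5 W active, <300 mW sleep, 20 W panel, 15 Ah battery.
# Assumptions (not stated in the text): 12 V nominal battery, 70% harvest efficiency.
ACTIVE_W, SLEEP_W = 1.5, 0.3
PANEL_W, CAPACITY_AH = 20.0, 15.0
NOMINAL_V, HARVEST_EFF = 12.0, 0.7

if __name__ == "__main__":
    print(f"active: {autonomy_hours(CAPACITY_AH, NOMINAL_V, ACTIVE_W):.0f} h without sun")
    print(f"sleep:  {autonomy_hours(CAPACITY_AH, NOMINAL_V, SLEEP_W):.0f} h without sun")
    print(f"needs {sun_hours_needed(ACTIVE_W, PANEL_W, HARVEST_EFF):.1f} full-sun hours per day")
```

With these assumptions, a full battery covers about 120 h (5 days) of active operation with no sun at all, and the panel needs roughly 2.6 hours of full sun a day, which is consistent with 24/7 operation in a sunny location.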

Software components

The project had several layers of complexity: while focusing on hardware engineering, we also had to take care of the software. We built an application, both backend and frontend, to give end users access to traffic monitoring. The app’s main strength was its payment module: we made it easy to scale and straightforward to integrate new payment systems into.
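One common way to make a payment module easy to extend is a provider interface plus a registry, so that adding a payment system means adding one class. The text does not describe CityCam’s actual design; this is a minimal sketch of that pattern, and `PaymentProvider`, `register`, `charge`, and `FakeCardProvider` are hypothetical names.

```python
from abc import ABC, abstractmethod
from dataclasses import dataclass


@dataclass(frozen=True)
class PaymentResult:
    ok: bool
    reference: str


class PaymentProvider(ABC):
    """Interface every payment integration implements."""

    name: str

    @abstractmethod
    def charge(self, user_id: str, amount_cents: int) -> PaymentResult:
        ...


_PROVIDERS: dict[str, PaymentProvider] = {}


def register(provider_cls: type[PaymentProvider]) -> type[PaymentProvider]:
    """Class decorator: adding a new payment system is one decorated class."""
    provider = provider_cls()
    _PROVIDERS[provider.name] = provider
    return provider_cls


def charge(provider_name: str, user_id: str, amount_cents: int) -> PaymentResult:
    """Route a payment to the chosen provider; calling code never changes."""
    provider = _PROVIDERS.get(provider_name)
    if provider is None:
        raise ValueError(f"unknown payment provider: {provider_name!r}")
    return provider.charge(user_id, amount_cents)


@register
class FakeCardProvider(PaymentProvider):
    """Hypothetical provider for illustration; a real one would call a gateway API."""

    name = "fake_card"

    def charge(self, user_id: str, amount_cents: int) -> PaymentResult:
        return PaymentResult(ok=amount_cents > 0, reference=f"card-{user_id}-{amount_cents}")
```

The rest of the app only ever calls `charge()` with a provider name, so scaling to more payment systems does not touch existing code paths.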

To ensure smooth and efficient communication between the cameras and their backend, we built a custom protocol on top of the Transmission Control Protocol (TCP).
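TCP delivers a continuous byte stream, so any protocol built on top of it needs framing to tell where one message ends and the next begins. The CityCam wire format is not described here; the sketch below assumes a simple length-prefixed frame (a 1-byte message type and a 4-byte big-endian length), with hypothetical message type codes for images and telemetry.

```python
import struct

# Hypothetical frame header: 1-byte message type + 4-byte payload length, big-endian.
HEADER = struct.Struct(">BI")

MSG_IMAGE = 1      # JPEG bytes from the camera (hypothetical type code)
MSG_TELEMETRY = 2  # battery charge, SIM balance, etc. (hypothetical type code)


def encode_frame(msg_type: int, payload: bytes) -> bytes:
    """Prefix the payload with its type and length so the receiver can split the stream."""
    return HEADER.pack(msg_type, len(payload)) + payload


class FrameDecoder:
    """Reassembles frames from TCP, which has no message boundaries of its own."""

    def __init__(self) -> None:
        self._buffer = bytearray()

    def feed(self, data: bytes) -> list[tuple[int, bytes]]:
        """Add bytes from one recv() call; return every frame that is now complete."""
        self._buffer.extend(data)
        frames = []
        while len(self._buffer) >= HEADER.size:
            msg_type, length = HEADER.unpack_from(self._buffer)
            end = HEADER.size + length
            if len(self._buffer) < end:
                break  # wait for the rest of this payload
            frames.append((msg_type, bytes(self._buffer[HEADER.size:end])))
            del self._buffer[:end]
        return frames
```

Feeding the decoder arbitrary chunks, as `recv()` may return them, still yields whole frames, which is the property a camera protocol over TCP has to guarantee.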

We also made infrastructure deployment simple, with minimal human involvement: clicking a couple dozen buttons is enough to deploy the whole project.

For the frontend, we built a PWA: a relatively recent technology that makes the project’s website behave in many ways like a regular mobile application. PWAs combine the benefits of mobile apps and websites: for example, they are indexable by search engines and can keep giving users access to data even when they are offline.

Ongoing progress

Our most recent bold step in perfecting the device was to shift from microcontrollers to microprocessors, as the latter offer more capabilities. The prototypes and the first batch were based on a Raspberry Pi Zero, with a Huawei 4G modem as the transceiver and an OV5640 camera connected via the MIPI interface. This combination proved to be as energy-efficient as the previous version of the device.

The power module adds three important functions to the RPi. First, it lets us use LiFePO4 batteries as the power source. Second, it charges these batteries quickly (at up to 4 A) from solar panels at the highest achievable efficiency, thanks to MPPT control. Third, it provides a metrics collection system that monitors the whole device’s energy consumption, the battery’s charge level, and solar activity.
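The technology stack includes Prometheus and Grafana, so device metrics like these would typically be exposed in the Prometheus text format for scraping. As a minimal sketch, assuming hypothetical metric names and a `device` label, the function below renders the three signals as gauges; in practice the official `prometheus_client` package (its `Gauge` class and `start_http_server`) would handle this.

```python
# Hypothetical metric names and help strings for the three signals the power module tracks.
METRICS = {
    "citycam_power_consumption_watts": "Power drawn by the whole device.",
    "citycam_battery_charge_ratio": "Battery state of charge from 0 to 1.",
    "citycam_solar_power_watts": "Power currently delivered by the solar panel.",
}


def _escape_label(value: str) -> str:
    # The text format requires escaping backslash, double quote and newline in label values.
    return value.replace("\\", "\\\\").replace('"', '\\"').replace("\n", "\\n")


def render_metrics(device_id: str, readings: dict[str, float]) -> str:
    """Render gauge readings in the Prometheus text exposition format."""
    lines = []
    for name, help_text in METRICS.items():
        if name not in readings:
            continue  # skip signals this device did not report
        lines.append(f"# HELP {name} {help_text}")
        lines.append(f"# TYPE {name} gauge")
        lines.append(f'{name}{{device="{_escape_label(device_id)}"}} {readings[name]}')
    return "\n".join(lines) + "\n"
```

Gauges fit all three signals because each can go up as well as down; Grafana can then chart consumption against solar input per device.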

We continue to develop this traffic monitoring system. We hope that the meticulous work of our hardware and software engineers will ultimately result in better traffic for everyone.

Technology stack

Python

Django

MinIO

Go

PostgreSQL

React

Celery

Prometheus

Grafana

C

STMicroelectronics

Texas Instruments

ARM

EasyEDA

JLCPCB

WEBENCH Power Designer

CorelDRAW

Topor

SOLIDWORKS

Altium Designer

Meet the team

Belek Abylov

Full-Stack Developer

Vlad Andreev

CQO

Kirill Avdeev

Backend Developer

Aleksandr Edakin

Backend Developer

Maksim Gachevski

Frontend Developer

Gennady Karev

Full-Stack Developer

Oleg Katkov

Backend Developer

Dmitri Kononenko

Project Manager

Anton Kozlov

System Developer

Andrew Sapozhnikov

CIO

Gaukhar Taspolatova

Project Manager

“The Mad Devs specialists aren’t afraid of challenges and are able to combine both hardware and software skills. This is what was needed for us. We also appreciate the professional approach to the work and constant communication.”