One day I got a cool task to improve the accuracy of positioning and distance calculating based on GPS data on Android devices for the taxi service application.

After some research, I found that different GPS-receivers produce different errors. The accuracy of the GPS point can be influenced by buildings, big trees, various weather conditions, and the configuration of the satellites. GPS gives nice values to detect a one-time position, but its accuracy is not enough when using a stream of GPS data to calculate the distance and cost of taxi-services. The received data is not suitable due to the error accumulation. The error accumulation may lead to 400% of overpricing and to customer's dissatisfaction as the result.

Therefore, at first, the problem should be solved on Android devices. Most drivers use Android smartphones because of its low price. The next step is to implement some online services for that.

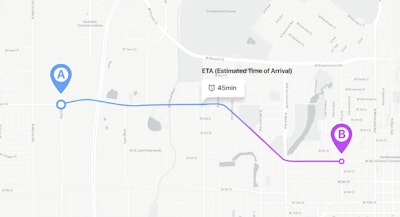

The short and simple description of the project is here.

A brief search has shown that one of the most reliable methods to increase positioning and distance calculating accuracy is to create a vector map of the roads and to project GPS coordinates to the nearest road like it’s implemented here. In addition, some graph-based checks should be implemented. This method might be suitable if there was a map of road coordinates. Yet the collection of such information for the map is very difficult and resource-consuming, therefore, we cannot use this method. We could use Google Maps Roads API, but that service has a limitation — a maximum of 2500 requests per day. Also, some phones don’t support google play (yes, they do exist).

Formulation of the problem

Since we do not have a reliable database with road coordinates, we need to get better GPS coordinates programmatically. It means that we have to implement an algorithm to solve the following issues:

- The false increase in distance when a car is not moving;

- Sharp “jumps” to a point remote from the real route for significant distance (about 500 meters);

- Short-term loss of GPS signal;

Herewith, the algorithm should not consume a whole battery within 3 minutes as well as all available RAM. What is more, it should not accumulate coordinates, but the process only several previous and current coordinates instead.

The next research has shown that the most common solution for the issues of positioning and noise is the Kalman filter. There are a lot of articles about it on the web, so I will not explain how it works. Our goal is to provide a pragmatic solution, without a ton of theory.

So, here is a question: what can provide information about object position except for GPS?

- We could use wi-fi points, but there are not many free wi-fi points in our city;

- We could also use GSM towers, but again, it is necessary to collect information and create a map of such objects (wi-fi and gsm towers);

In addition, those sources do not provide precise results. To get more accurate results we need information about the motion characteristics like velocity and direction. The first google request returned that modern smartphones have a bunch of sensors such as an accelerometer, magnetometer, and gyroscope. It means that theoretically, we have one more source of position data. Besides, we need to know what do those sensors do and how to use them.

Accelerometer

An accelerometer is a device that measures proper acceleration. It’s a very simple device that, in simple words, knows where the Earth is (doesn’t work in free fall). Conceptually, an accelerometer behaves as a damped mass on a spring. When the accelerometer experiences an acceleration, the mass is displaced to the point that the spring is able to accelerate the mass at the same rate as the casing. The displacement is then measured to give the acceleration. There is a similar principle in mems-accelerometer — but the capacitor used as mass.

The accelerometer could be used for acceleration measurement and for angle determination (as inclinometer). Sometimes it is hard to interpret accelerometer data. Let’s say, we have an X-axis acceleration. Does it mean that we have acceleration or it is caused by the incline of a device? In the simplest case, we can check Z-acceleration, yet real situations are more complex. The main problem here is that the sensor gives relative accelerations and we cannot link it with GPS data.

Another problem is the noise. We can use the “Acceleration explorer” application to observe the noise and ways to compensate it. We can use a low pass filter, moving average, median filter, or some other algorithms to compensate for the noise. Yet it leads to other errors and slow filter reaction.

We could also use Kalman’s filter to solve this issue, but in this case, we should know the standard deviation of an accelerometer. We can get standard deviation from the datasheet (in embedded systems for example), yet we don’t know which accelerometer is used in an abstract smartphone so we should calculate this value during the calibration step.

Gyroscope

Gyroscope is a device used for measuring or maintaining orientation and angular velocity in an inertial system.

Knowing the initial position of the device in space, you can find its current position by integrating the gyro readings. However, integration leads to “drift” that have to be compensated somehow.

Magnetometer

A magnetometer is an instrument that measures the magnetic field’s characteristics. In other words, this sensor always knows where is North.

However, it’s not as simple as we could expect. There is something called “magnetic declination” — the angle between magnetic North and true North. The National Geospatial-Intelligence Agency (NGA) provides source code written in C that is based on the World Magnetic Model (WMM). The source code is free to download and includes a data file updated every five years to account for the movement of the magnetic north pole. But we don’t have to worry about this because there is a special method for this in Android.

Determining the orientation of the phone in space

Now we have 3 sensors that allow us to determine the unique position of the phone in space. We can express it as a rotation matrix. We also can get the vector of relative accelerations.

In order to obtain acceleration in the “absolute” coordinate system (associated with the Earth), simply multiply the vector of the obtained acceleration by an inverted rotation matrix. Let’s say, we already have rotation matrix R from some AHRS (see below) and our device isn’t moving (laying on the table). Then our acceleration vector A should look like this: [Ax Ay Az].T() (transposed). So multiplication R*[0 0 1].T() should give us vector A. It’s a good AHRS algorithm check, however. [0 0 1].T() is a vector of accelerations in “absolute” coordinate system here. If R*[0 0 1].T() = A then we should be able to get [0 0 1] from known R and A. There is no division operation with matrices, but we can multiply one matrix with an inverted second one. So our equation is [0 0 1] = Rinv*A. It’s better to use OpenGL for these operations.

The result is an acceleration vector in the “absolute” coordinate system : [Aeast, Anorth, Aup].

There are many methods to determine the position of the device in space based on the data from an accelerometer, magnetometer, and gyroscope. Some of them can be viewed in this application:

In my opinion, Android rotation vector-based and Madgwick AHRS based are the best tools, since they are easy to implement and work fast.

Android virtual sensors

Android developers have done a great job to improve sensors, so we don’t need to implement really complex sensor “fusion” algorithms. We can just use already made a LINEAR_ACCELERATION sensor for linear accelerations (excluding gravity force) and ROTATION_VECTOR sensor for device orientation. The rotation vector sensor uses the Kalman filter. See https://android.googlesource.com/platform/frameworks/native/+/master/services/sensorservice/Fusion.cpp for example.

Madgwick filter

Madgwick filter is open-source software designed primarily for the low computing power of the target system. It uses the accelerometer, gyroscope, and (optional) magnetometer readings as inputs and produces quaternion describing its orientation in the space. It works really fast and almost doesn’t consume resources, but it’s hard to define filter coefficients. The first coefficient is data readings frequency. It’s easy to define it in embedded systems, but not so easy in Android. We can define minimum frequency value, but the real frequency can be much higher. We have to calculate it before using the Madgwick filter. The second coefficient is a gain coefficient that should be specified for each device individually. However, it is really fast and easy to implement the filter.

Kalman filter

All preparatory steps are done. Now we have an acceleration vector in the “absolute” coordinate system and we can implement Kalman filter.

The Kalman filter is an effective recursive filter that estimates the state vector of a dynamic system using a series of incomplete and noisy measurements. It’s named after Rudolf Kalman.

The implementation of the filter itself is not very complicated. It is necessary to define and implement several operations with matrices and simply follow the formulas from Wikipedia. There are many ready libraries (even in openCV there is an implementation of this filter), but you can implement it yourself to understand better how it works. The main task is to define the state vector of the system, the transition matrix, the control vector, and other components of the Kalman filter. One of the most difficult tasks is to define the covariance noise matrix of the process and measurement noise.

Our solution is fully based on the law of uniformly accelerated motion in matrix form since we have 2 directions — North and East. In this form, it is quite easy to extend filter so that it takes into account the acceleration up, but for now, it is not necessary.

Filter parameters

System state vector

X and Y are coordinates here. X’ and Y’ are velocities here (first derivatives).

State-transition model

Control-input model

Control vector

Observation model

The observation model maps the true state space into the observed space. In our case, it equals to identity matrix because we can get everything from GPS receiver (speed and coordinates) and we don’t need to convert it somehow. If the receiver can’t get information about speed then the observation model changes to this:

The second one shows the better result with a short-term loss of the GPS signal because the state vector is not affected by the last received speed from the receiver.

Covariance of observation noise

if receiver doesn’t provide information about speed. In another case:

These values could be received in different ways. You can parse NMEA message and use HDOP value. Also, you can use Location class getAccuracy() method. We should prepare speed for using in our coordinate system. For this we could use course component from NMEA message or getBearing() method from Location class. Here the assumption is made that the speed X’ does not affect the coordinate X in any way, because they are obtained in different ways. Similarly, the coordinate Y and the velocity Y’ has no effect on the velocities and coordinates of X.

Covariance of process noise

When forming the matrix, it was assumed that the error of the accelerometer along the X axis does not affect the error along the Y axis in any way.

In order for the Kalman filter to work, all data had to be brought to a single coordinate system. The following article is taken as a basis: https://en.wikipedia.org/wiki/Great-circle_distance. The point is that all coordinates were translated at distances from the point with coordinates 0, 0.

GeoHash

GeoHash — an algorithm for converting 2 coordinates of the form 37.571309, 55.767190 (longitude, latitude) into 1 string. It is similar to the binary search algorithm and works very fast, almost without spending computing resources.

In this project, it is used to solve 2 problems. First, it is necessary to somehow merge the nearby points to reduce the flow of redundant information. To do this, you can select a radius and merge all the points that fall into the circle with this radius. But how to choose the radius and center of this circle? Calculating the distance in spherical coordinates is a costly operation, so a GeoHash function is used to “join” fast coordinates. This function produces a hash as a string, the length of which is determined by the user and affects the encoding accuracy. The longer the hash length is, the smaller the region is and the greater accuracy of the coordinates is in one region. The maximum possible length of a GeoHash string is 12 characters. A very good result for our task shows hash with 7 and 8 precision.

Another way to use this function is “jumps” filtering. We will assume that if the GPS receiver has given out more than 3 (user-defined count) coordinates with one hash, then this point is correct and it should be taken into account. If less — then, probably, this is some random value that does not need to be taken into account.

Without GeoHash filter

GeoHash filtered route. Precision = 8, min. count of points with one geohash = 2

GeoHash filtered route. Precision = 7, min. count of points with one geohash = 3

As you can see from the examples above, the GeoHash filter allows you to greatly reduce the number of points processed. This can be critical if the coordinates are to be processed by the server or if you plan to save routes in the database.

Results and further work

As a result, we wrote an Android module and a C library that implements all of the above filters. Visually routes became smoothed and calculation error was reduced.

Red circles are GPS coordinate. Blue circles — filtered values. There were GPS connection loss and route was restored.

The difference in the processing of noise and jumps. On the screenshot below left — used solution (red marked route, which will be accounted for by the application, green — jumps, and jamming of the GPS receiver). On the right, it is the famous taxi service.

Last and the showiest example of filters applying:

“Jumps” are between 10–250 meters. This is the real route with x10 speed.

Use and develop

Link to the repository :

For questions and improvement suggestions please create issues in our repository (see link above).

This solution is distributing under MIT license. Use it and don’t forget to place copyrights :)

References

https://www.youtube.com/watch?v=C7JQ7Rpwn2k&t=1856s — coolest report about sensor fusion.

http://x-io.co.uk/open-source-imu-and-ahrs-algorithms/ — everything related to Madgwick AHRS with implementation.

https://alexanderpacha.files.wordpress.com/2017/05/masterthesis-pacha.pdf — very interesting article with great quaternion description, augmented reality, and orientation algorithms.

Tech Stack of Prominent Companies: What Are Industry...

Tech Stack of Prominent Companies:...

Tech Stack of Prominent Companies: What Are Industry Giants Using to Power Their Applications?

Netflix vs. Hulu, Hubspot vs. Salesforce, Spotify vs. Pandora, Databrick vs. ByteDance, Canva vs. Miro, and Uber vs Lyft are the rivalries that...